Introducing Inquisitorial by Indianaut, a long-form newsletter where we explain and analyze important stories stemming from the Indian entrepreneurial ecosystem & economy. New articles every Saturday & Sunday.

The way we function as a society is largely problematic. Consider the delusions of grandeur in the colonial mind, which forced an inferiority complex onto those under orientalist rule until they eventually internalised it; or the hegemonic structure of our phallocentric society, in which women are relegated to the margins while men occupy the centre.

These mental frameworks not only flow subconsciously into our offspring over generations but also build a binary culture through a discourse that has run uninterrupted for centuries, and which has now seeped smoothly into our search engines as well.

A group at Microsoft found that AI which learns from text on the internet can also learn unhealthy stereotypes. Journalist Claire Cain Miller of The New York Times helped bring the issue of datasets tainted by our internalised prejudice to public attention in 2015. Her article said:

“Google’s online advertising system, for instance, showed an ad for high-income jobs to men much more often than it showed the ad to women, a new study by Carnegie Mellon University researchers found. Research from Harvard University found that ads for arrest records were significantly more likely to show up on searches for distinctively black names or a historically black fraternity. The Federal Trade Commission said advertisers are able to target people who live in low-income neighborhoods with high-interest loans. Research from the University of Washington found that a Google Images search for ‘C.E.O.’ produced 11 percent women, even though 27 percent of United States chief executives are women.”

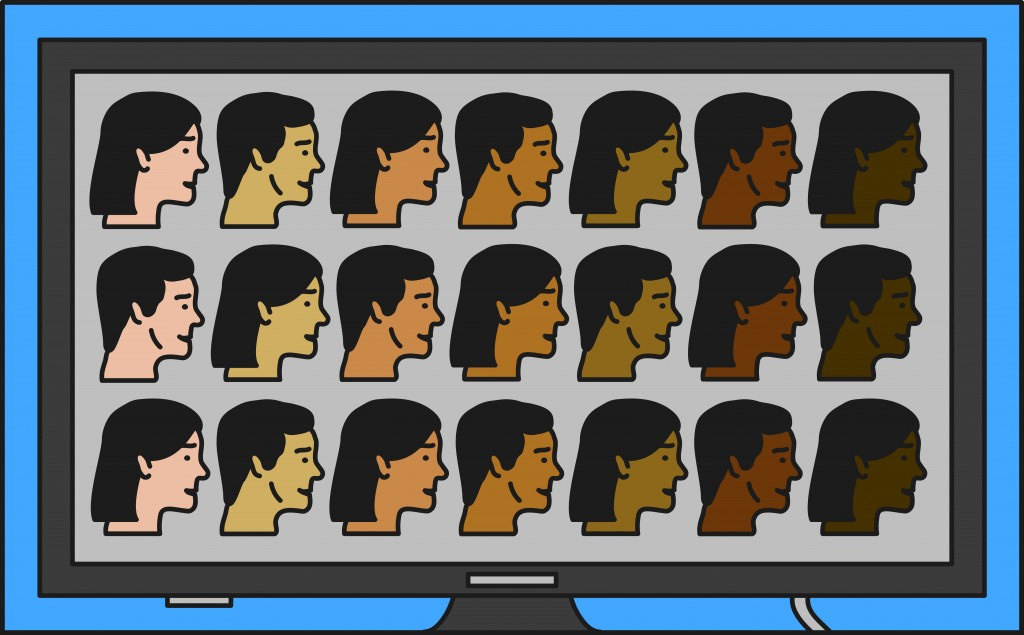

(Credits: Albert Tercero)

Democratizing AI: A Double-Edged Sword?

When an AI reads text on the Internet, it learns about words, and you can then ask it to reason with analogies. An AI system stores each word as a list of numbers, called a vector or word embedding. It arrives at those numbers through statistics of how the word is used across the Internet.
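The analogy trick described above can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions: the three-dimensional vectors are hand-made for this example, not learned from any corpus, and real embeddings (word2vec, GloVe and the like) have hundreds of dimensions that are rarely this tidy. The sketch answers "a is to b as c is to ?" by computing b − a + c and picking the nearest remaining word by cosine similarity. The second query shows how a stereotype baked into the numbers resurfaces as a biased analogy, echoing the kind of finding the Microsoft group reported.

```python
import math

# Hand-made vectors, invented purely for illustration (an assumption of this
# sketch). Dimensions: [gender, royalty, occupation].
VECTORS = {
    "man":        [1.0, 0.0, 0.0],
    "woman":      [-1.0, 0.0, 0.0],
    "king":       [1.0, 1.0, 0.0],
    "queen":      [-1.0, 1.0, 0.0],
    # The skewed gender component mimics a stereotype absorbed from text.
    "programmer": [0.6, 0.0, 1.0],
    "homemaker":  [-0.6, 0.0, 1.0],
}


def cosine(u, v):
    """Cosine similarity: 1.0 means the vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(x * x for x in v))
    return dot / (norm_u * norm_v)


def analogy(a, b, c, vectors=VECTORS):
    """Solve 'a is to b as c is to ?' using the vector arithmetic b - a + c."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    # Exclude the query words themselves, as analogy evaluations usually do.
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))


print(analogy("man", "king", "woman"))        # queen
print(analogy("man", "programmer", "woman"))  # homemaker
```

Nothing in the arithmetic is prejudiced; the bias lives entirely in the numbers, which is why embeddings learned from biased text reproduce that text's stereotypes.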

Recently, MIT withdrew a popular computer vision dataset after researchers found that it was rife with social bias. They found racist, misogynistic, and demeaning labels among the nearly 80 million pictures in Tiny Images (a collection of 32-by-32-pixel color photos). MIT's Computer Science and Artificial Intelligence Lab therefore removed Tiny Images from its website and asked its users to delete their copies. This may sound like a small issue that can be rectified sooner or later, but given the terabytes of data generated globally every second, it could snowball into a big problem in a short time.

In 2016, Microsoft's AI chatbot Tay, which was designed to engage in casual and playful conversation on Twitter, had to be shut down within 24 hours after people started tweeting all sorts of misogynistic and racist remarks at it. Tay learnt those phrases and started repeating the sentiments back to users.

These biases are intertwined so deeply in our consciousness that they eventually gain a strong foothold in our machine learning systems, creating a false sense of democratization. In the Tiny Images example above, the labels were drawn from WordNet, a database of word relationships, which transferred its derogatory, stereotyped, and inaccurate information onto Tiny Images; the dataset may in turn have passed that problematic data on to countless real-world applications.

The real culprit seems to be a lack of diverse perspectives among the people coding these algorithms, which leaves these nascent technologies vulnerable to blind spots. For instance, Google's YouTube app failed to orient videos correctly on smartphones held by left-handed people. This may have happened because the team that coded the feature had only right-handed members.

(Credits: Vincent Roche)

Pride and Prejudice in the AI Era

Researchers at University College Dublin and UnifyID, an authentication startup, conducted an "ethical audit" of several large vision datasets, each containing many millions of images. They focused on Tiny Images as an example of how social bias proliferates in machine learning. Circa 1985, psychologists and linguists at Princeton compiled a database of word relationships called WordNet, and their work has served as a cornerstone of natural language processing (NLP). Scientists at MIT CSAIL compiled Tiny Images in 2006 by searching the internet for images associated with words in WordNet. Because WordNet includes racial and gender-based slurs, Tiny Images collected photos labeled with such terms.

Concerns over bias in AI intensified after a generative model called PULSE converted a pixelated picture of Barack Obama, who is Black, into a high-resolution image of a white man. The incident drew skepticism worldwide and could provoke a sharp backlash against AI.

Truth, Subjectivity and Vague Ideas of Justice

Journalism, politics, public policy and legal advisory are fields that require objective decisions drawn from large amounts of subjective data. Now that the latent biases in our datasets have been brought to the fore, it is a chilling thought that judicial decisions, rolled out with such facile ease in the near future, might be tainted by the seemingly "objective analysis" of these biased datasets. The same issue arises in journalistic reporting, where reporters struggle to find the right 'frame' for an AI algorithm so that it avoids social bias against their subjects. MIT graduate student Joy Buolamwini calls this problem the "Coded Gaze" and shares insights on it in a TED Talk.

In the US criminal justice system, a computer programme is being used for bail and sentencing decisions, and it disproportionately affects people of color, as journalists Harry Armstrong and Jared Robert Keller reported in The Guardian:

“In the US, criminal ‘risk assessments’ based on predictive analytics have already been shown to be biased against black people because the data used to build the system was inherently biased.”

To avoid falling into this vicious cycle, we must put “ethical implications at the heart of the development of each new algorithm” as highlighted in a study by Accenture.

(Credits: ‘COMPAS Software Results’, Julia Angwin et al. (2016))

What's the Solution?

There is an urgent need for systematic intervention to remove or counteract these biases in the ocean of data; otherwise, the chances of stepping into a Black Mirror-style dystopia in the near future do not seem slim.

AI practitioners and coders must broaden their horizons, since they bear a responsibility to re-examine the ways data is collected and used. More often than not, we are unaware of the biases harbouring in datasets, and because one dataset's bias can propagate to another, it is necessary to track data provenance and address any problems discovered in an upstream data source.
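Tracking provenance can be as simple as recording, for every dataset, which upstream sources it was built from. The minimal Python sketch below assumes such a record exists (the `UPSTREAM` map and every name in it other than WordNet and Tiny Images are hypothetical). When a problem is found upstream, a breadth-first walk over the inverted lineage graph lists everything derived from the flawed source, directly or transitively, so each can be reviewed.

```python
from collections import deque

# Hypothetical lineage record: each dataset lists its upstream sources.
# "WordNet -> Tiny Images" follows the article; the rest are placeholders.
UPSTREAM = {
    "WordNet": [],
    "Tiny Images": ["WordNet"],
    "example-train-set": ["Tiny Images"],        # hypothetical
    "example-model": ["example-train-set"],      # hypothetical
    "unrelated-dataset": [],                     # hypothetical
}


def downstream_of(source, upstream=UPSTREAM):
    """Return every dataset that directly or transitively derives from source."""
    # Invert the map so we can walk from a source to the datasets built on it.
    children = {name: [] for name in upstream}
    for name, parents in upstream.items():
        for parent in parents:
            children.setdefault(parent, []).append(name)

    # Breadth-first search; the `affected` set also guards against cycles.
    affected, queue = set(), deque([source])
    while queue:
        current = queue.popleft()
        for child in children.get(current, []):
            if child not in affected:
                affected.add(child)
                queue.append(child)
    return affected


# If WordNet's labels are found to be harmful, everything built on it needs review.
print(sorted(downstream_of("WordNet")))
```

A real provenance system would also record versions and dates, but even this bare graph answers the key question: what else inherited the problem?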

On the consumer end, such biases reaffirm prejudiced notions in the minds of the masses, widening the cultural divide. Recently, many proposals have been made to set standards for documenting models and datasets so that harmful biases are weeded out before they take root.

“IBM has released a suite of awareness and debiasing tools for binary classifiers under the AI Fairness project. Once bias is detected, the AI Fairness 360 library (AIF360) utilizes its 10 debiasing approaches which can be applied to models ranging from simple classifiers to deep neural networks. Other libraries, such as Aequitas and LIME, have good metrics for some more complicated models but they only detect bias, not fixing it.”
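Detecting bias usually starts with simple group-fairness metrics. Two that AIF360 reports are disparate impact (the ratio of favourable-outcome rates between an unprivileged and a privileged group) and statistical parity difference (the gap between those rates). Rather than reproduce the library's API, the minimal Python sketch below computes both by hand on invented toy data, loosely modelled on the job-ad finding quoted earlier. The 0.8 cut-off is the widely cited "four-fifths" rule of thumb, used here only as an illustrative threshold.

```python
def selection_rate(outcomes):
    """Fraction of favourable (1) outcomes in a list of 0/1 decisions."""
    return sum(outcomes) / len(outcomes)


def disparate_impact(unprivileged, privileged):
    """Ratio of favourable-outcome rates; 1.0 means parity."""
    return selection_rate(unprivileged) / selection_rate(privileged)


def statistical_parity_difference(unprivileged, privileged):
    """Difference of favourable-outcome rates; 0.0 means parity."""
    return selection_rate(unprivileged) - selection_rate(privileged)


# Invented toy data: 1 = shown a high-income job ad, 0 = not shown.
women = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]
men = [1, 1, 0, 1, 0, 1, 0, 0, 1, 0]

di = disparate_impact(women, men)
spd = statistical_parity_difference(women, men)
print(f"disparate impact: {di:.2f}, parity difference: {spd:.2f}")
# prints "disparate impact: 0.40, parity difference: -0.30"

# Four-fifths rule of thumb: a ratio below 0.8 suggests potential adverse impact.
print("flagged" if di < 0.8 else "within four-fifths threshold")
```

Measuring a disparity is only the detection step; fixing it, for instance by reweighting or relabelling training data, is a separate task.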

A diverse workforce will also help reduce bias significantly. People from varied backgrounds are more likely to spot different problems and can help make your data more diverse and inclusive from the outset. With more distinct points of view in the room while building AI systems, we can all hope to create less biased applications.

Falguni Chaudhary is a PR specialist and communications strategist. She has worked as a consultant for startups in the AI, sustainable fashion and ed-tech spaces, and was previously a contributing editor at NewsD, Youth Incorporated Magazine and Feminism in India.